AI-Generated Images Blur Lines Of Consent

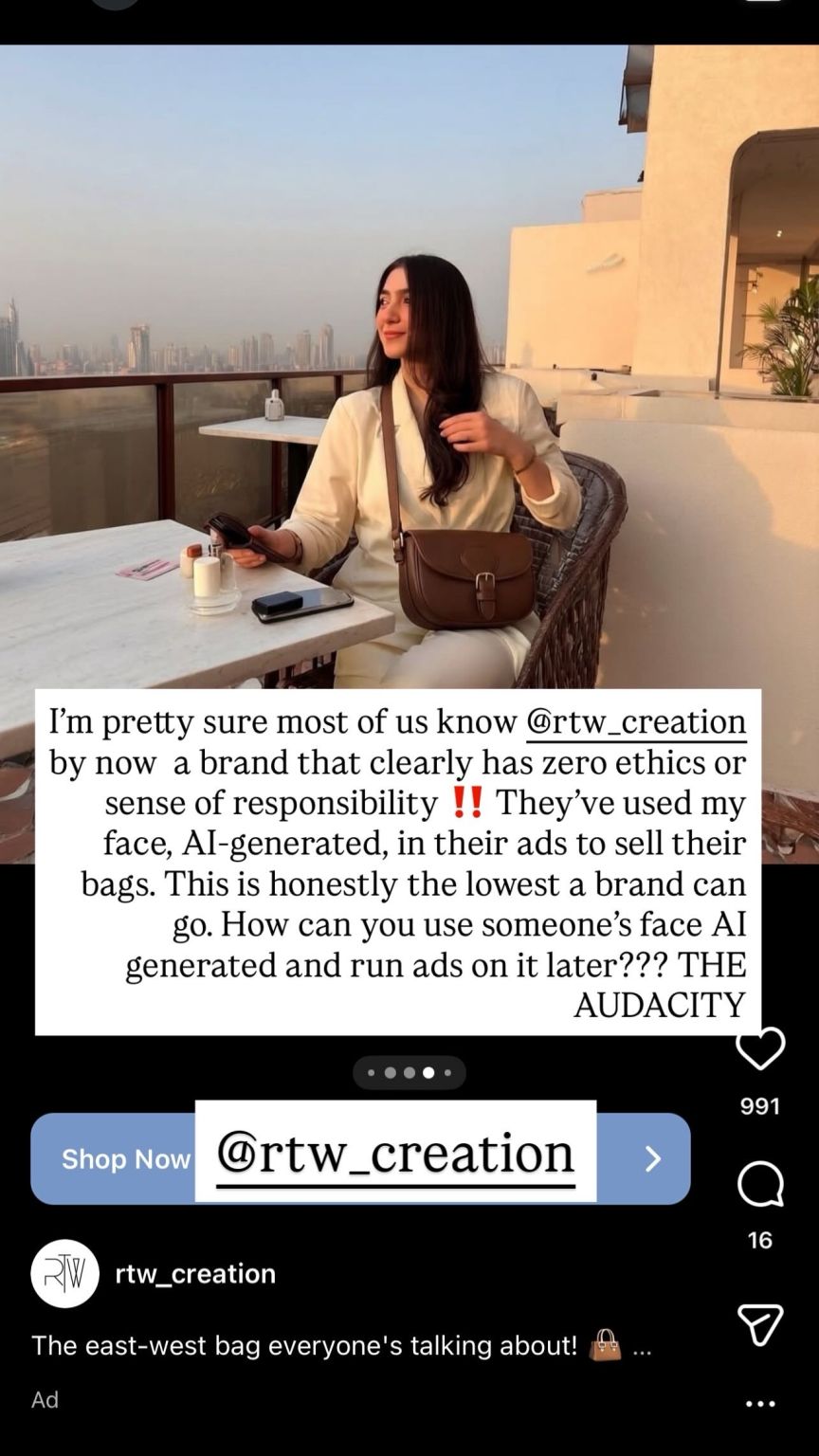

Earlier this week, social media manager Aresha Shaharyar Syed took to LinkedIn to call out RTW creations, an online handbag brand. Syed posted pictures of ads the brand had shared on social media, claiming that they used an AI-generated likeness of her without her consent, and said she had only raised her concerns publicly after failing to receive a concrete response from the CEO or team.

Just a few days before Syed’s experience, digital creator Ayesha Tariq took to Instagram with a similar story. Last Thursday, she posted a story describing how clothing brand Engine had used her likeness in a campaign even though she had never modelled for it or consented to her images being used. Following her public call-out, the brand deleted the posts. Tariq used the experience to point out that the issue wasn’t just about one campaign or brand but about respecting consent, even when it comes to AI-generated content.

She has raised an important point. As AI-generated content becomes increasingly common, so do the threats it poses to how we understand consent in the use of someone’s image and likeness. Over the last few years, AI-generated content that mimics or alters people’s likenesses has become widespread, and it brings significant harms. Estimates suggest that the number of deepfake videos increased by 550% between 2019 and 2024. Often, content created with someone’s likeness is meant to do direct harm. At the end of last year, X’s AI Grok came under fire for creating pictures of women in bikinis without their consent, with numerous cases of the AI creating sexualised images of women and minors.

While public figures are the most likely victims, with celebrities targeted 47 times by AI-generated impersonations in early 2025 alone, the ease of use of many generative AI platforms means anyone can now be subjected to this. This new risk raises important questions about what is and isn’t acceptable in the use of generative AI.

In both Syed’s and Tariq’s cases, the important thing to note is that the issue was one of consent rather than harm or defamation. In both cases the content created was seemingly harmless, and both brands appear to have given little to no thought to the impact it could have on the people whose likeness they were using. In doing so, they left us with an important lesson: AI-generated content doesn’t need to intend harm or defamation in order to be problematic. It raises a more nuanced conversation about why no one should be able to generate content that represents someone else without their consent, as doing so muddies the waters around a person’s own decisions about how they want to be depicted publicly.

Hera Husain, founder of Chayn, a global tech non-profit empowering women and marginalised genders facing abuse, has focused much of her work on empowering vulnerable groups through her platform and on fighting the kind of abuse that comes with the misuse of technology, including AI.

“Everyone should have the right to their own image. Companies cannot use a model's face as they wish without consent. Generative AI creates a lucrative environment for abuse and exploitation - whether that is an ex-husband trying to blackmail their partner to come back to them or a company that doesn't want to pay a model for their labour. Industry regulators need to ban this practice,” she tells Echoes.

With so many new ethical questions arising as we navigate these technologies, platforms are starting to address the issue too. YouTube is introducing likeness detection technology to help creators identify and take down unauthorised use of their image. The feature lets creators submit a selfie video and ID for verification, enabling YouTube’s system to flag newly uploaded videos that may include altered or AI-generated versions of their face. But Big Tech has never had a great history of fighting for the little man, so it remains to be seen whether this will actually protect individuals.

Laws around AI use and generation also remain vague in many places, and whether you can sue someone just for generating an AI likeness of you depends on the circumstances.

Until legislation and its implementation globally begin to protect victims from unethical and problematic AI use, we need to keep calling out this kind of use of AI-generated content and educate ourselves and our communities on whether we should be using AI to generate such content at all.

Anmol Irfan is a freelance journalist, editor and the co-founder of Echoes Media. Her work focuses on marginalised narratives in the Global South, looking at gender, climate, tech and more. She tweets @anmolirfan22
